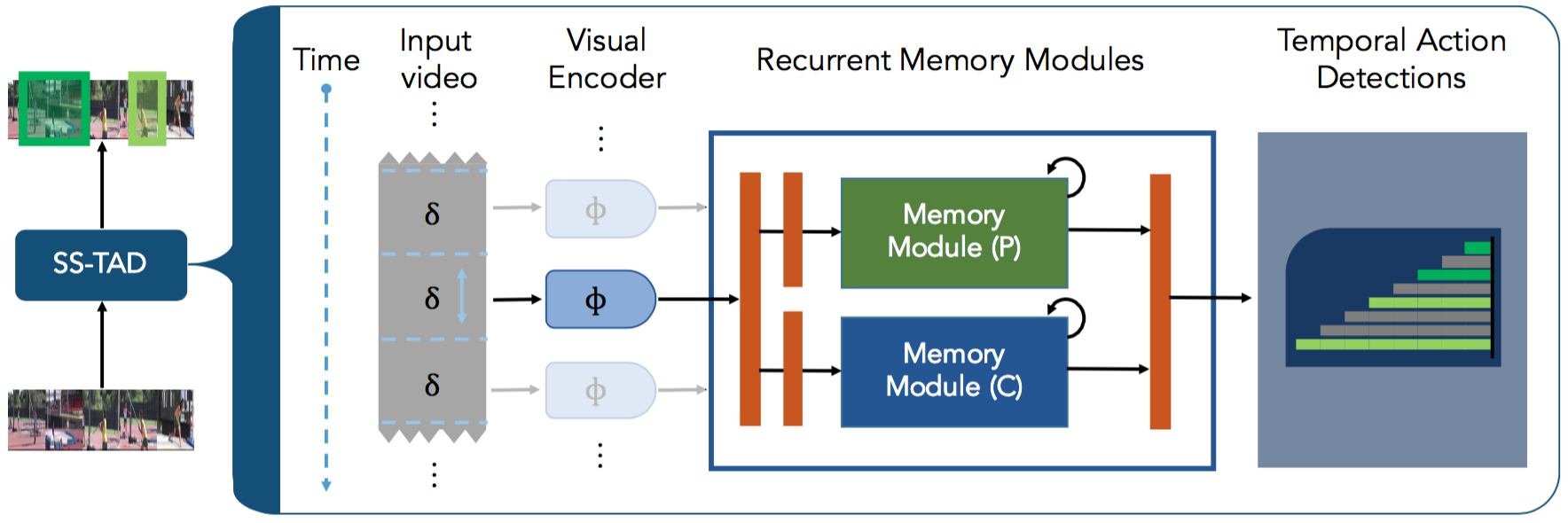

End-to-End, Single-Stream Temporal Action Detection in Untrimmed Videos (SS-TAD)

Welcome to the official repo for "End-to-End, Single-Stream Temporal Action Detection"! This work was presented as an Oral Talk at BMVC 2017 in London.

SS-TAD is a new, efficient model for generating temporal action detections in untrimmed videos. Analogous to object detections in images, temporal action detections give the temporal bounds within a video where actions of interest may occur, together with the action class of each.
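To make the idea of a temporal action detection concrete, here is a minimal Python sketch of one way such an output could be represented. It is an illustration only and is not the data format used by the code in this repo: the `TemporalDetection` class, its field names, the `score` field, the `active_at` helper, and the example values are all hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class TemporalDetection:
    """One detected action instance in an untrimmed video (hypothetical layout)."""
    start_sec: float  # lower temporal bound of the action
    end_sec: float    # upper temporal bound of the action
    label: str        # action class
    score: float      # confidence value; an assumption, not taken from the paper

    def __post_init__(self) -> None:
        # Reject empty or inverted intervals.
        if self.end_sec <= self.start_sec:
            raise ValueError("end_sec must be greater than start_sec")

    @property
    def duration(self) -> float:
        return self.end_sec - self.start_sec


def active_at(detections: List[TemporalDetection], t: float) -> List[TemporalDetection]:
    """Return the detections whose temporal bounds contain time t (in seconds)."""
    return [d for d in detections if d.start_sec <= t <= d.end_sec]


# Made-up example values, not results from the paper.
detections = [
    TemporalDetection(12.0, 19.5, "LongJump", 0.91),
    TemporalDetection(40.0, 52.0, "PoleVault", 0.78),
]
```

In this sketch, each detection pairs a time interval with a class label, much as an object detection pairs a bounding box with a class label.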

This work builds upon our prior work, "SST: Single-Stream Temporal Action Proposals", published at CVPR 2017. SS-TAD now provides end-to-end temporal action detection without requiring a separate classification stage on top of proposals. Furthermore, we observe a significant increase in overall detection performance. For details, please refer to our paper.

Resources

Quick links: [paper] [code] [oral presentation]

Note: The code in this repo is currently in pre-release; see code/README.md for details on planned updates.

Please use the following bibtex to cite our work:

    @inproceedings{sstad_buch_bmvc17,
      author = {Shyamal Buch and Victor Escorcia and Bernard Ghanem and Li Fei-Fei and Juan Carlos Niebles},
      title = {End-to-End, Single-Stream Temporal Action Detection in Untrimmed Videos},
      year = {2017},
      booktitle = {Proceedings of the British Machine Vision Conference ({BMVC})}
    }

If you find this work useful, you may also find our prior work of interest: SST proposals GitHub repo